Recently we posted the first in a series of articles discussing the changes we undergo as we age and how those changes affect our interactions with products and interfaces. The first looked at changes our eyes undergo; now, we’ll examine the changes to our ears — and the rest of our auditory system — and what those changes mean for design.

The visual system dominates our interactions with everything around us — from products to people to environments. Yet the auditory system is our early warning system. We respond to alerts coming through our ears much faster than those through our eyes. When a storm siren sounds near you, you hear it right away. But how many times have you noticed the message light blinking on your phone, only to realize it’s been blinking for hours (or even days)?

I’ll admit that a storm siren versus the message light isn’t a fair comparison — a big noise versus a little light. Of course, you’re going to hear one and not see the other. But think back to the last time an ambulance or police cruiser zoomed by with lights flashing and siren sounding. Both are designed to be noticed, to make sure you see and hear that emergency responder, but which did you notice first?

It shouldn’t be surprising that we react to sounds faster. To process something with your eyes, it needs to be in your field of view; even to notice it, it has to be at least in the periphery of your vision. There are no such restrictions for the ears. In terms of human evolution, effective warnings were more likely to come in through our ears than our eyes. By the time you saw the predator, it was probably too late to do much but fight for your life. But by paying attention to sounds, you had a decent chance of avoiding that encounter and thus a better chance of surviving, so we evolved to react to sounds, particularly those that are unusual or out of place.

In product design, we take advantage of this. Your phone rings to tell you that you have a call. Your microwave dings to tell you your food is ready. And in healthcare, just about every piece of equipment beeps to indicate that something needs to be done. In fact, there are so many auditory alerts in those settings that they’ve become a serious concern called alarm fatigue, leading the Joint Commission to issue a sentinel event report. But while alarm fatigue is a major problem, it’s an issue for another article.

Design Guidelines for Aging Ears

So let’s get back to what happens to your ears (and the rest of the auditory system) as you age. As before, we’ll start with the design guidelines you typically see for older populations. Those pertaining to the auditory system are usually along these lines:

- Use frequencies below 2500 Hz.

- Minimize sources of noise.

- Use recorded (digitized) speech rather than synthetic speech.

- Use natural language interactions.

- Minimize the amount of information conveyed through the auditory system.

Again, these are great recommendations. But why are they necessary?

How Ears Age

Much like the need for reading glasses, age-related hearing loss (called presbycusis) is one of those things that we simply expect with age. Similar to visual changes, age-related hearing loss starts much earlier than you think. By the time you are 18, you can no longer hear sounds you could hear when you were 8.

Children can hear frequencies as low as 20 Hertz (Hz) and as high as 20,000 Hz. By the time you are 18, you can’t hear sounds above 16,000 Hz. By 30, sounds above 15,000 Hz are lost. By 50, nothing above 12,000 Hz is heard. By 70, you can only hear up to about 6,000 Hz (of course, these are all averages; losses vary dramatically across individuals).
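As a rough illustration, the averages above can be captured in a small Python lookup. This is only a sketch: the names `UPPER_LIMIT_BY_AGE`, `approx_upper_limit_hz`, and `likely_audible` are hypothetical, and treating the limit as a step function between the listed ages is an assumption. The figures are the averages quoted above, not clinical thresholds.

```python
# Rough average upper hearing limits from the paragraph above: (minimum age, Hz).
# Illustrative only; losses vary dramatically across individuals.
UPPER_LIMIT_BY_AGE = [
    (0, 20_000),
    (18, 16_000),
    (30, 15_000),
    (50, 12_000),
    (70, 6_000),
]

LOWER_LIMIT_HZ = 20  # lowest audible frequency quoted above


def approx_upper_limit_hz(age: int) -> int:
    """Return the average highest audible frequency for a given age.

    Hypothetical helper: a step function, with no interpolation between ages.
    """
    if age < 0:
        raise ValueError("age must be non-negative")
    limit = UPPER_LIMIT_BY_AGE[0][1]
    for min_age, hz in UPPER_LIMIT_BY_AGE:
        if age >= min_age:
            limit = hz
    return limit


def likely_audible(tone_hz: float, age: int) -> bool:
    """True if the tone falls within the average audible range for this age."""
    return LOWER_LIMIT_HZ <= tone_hz <= approx_upper_limit_hz(age)
```

With these averages, `likely_audible(2500, 70)` is true, while `likely_audible(17_000, 30)` is false.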

If you have a range of ages in your home or office, get everyone together and watch this video that demonstrates the loss of high frequencies (don’t worry about headphones unless you want to accurately test your own hearing). Ask each person to raise their hands when they can hear the tones. Our office ranges from 25 to over 55, so I think I’ll do this for our next Monday morning movie. If everyone is being honest, what you should see is people raising their hands in the order of youngest to oldest (you may also notice that women tend to raise their hands before men of the same age — we typically experience less hearing loss).

For the most part, we don’t notice our hearing losses because they are hard to detect until they become extreme. But some products are designed to take advantage of these changes. Consider the Mosquito, a highly controversial electronic loitering deterrent: it emits high frequencies that can be heard by teens but not by adults, encouraging the younger set to leave. On the flip side, “mosquito ringtones” are marketed to teens as ones that only they can hear, so they avoid getting busted by parents and teachers.

As with changes to the visual system, age-related hearing loss is a cumulative effect. The best way to understand it is to look at how your auditory system works.

The Auditory System Structures

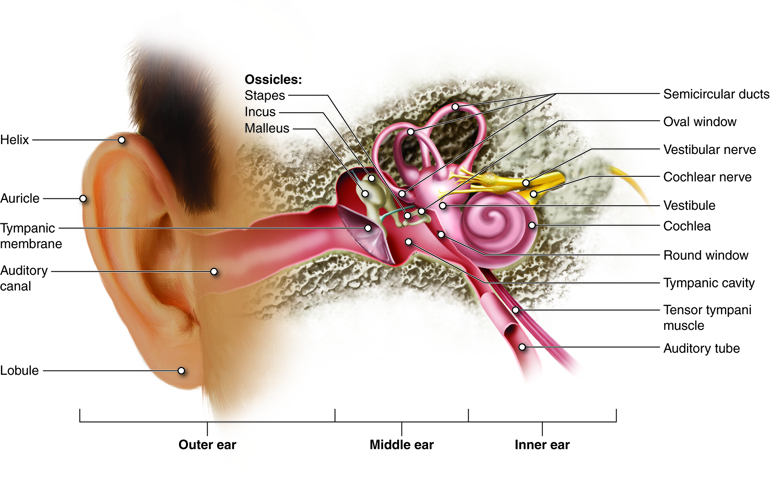

The visible part of your outer ear, known as the pinna, captures sound waves and funnels them into your auditory canal. The pinna also amplifies sound, which is why cupping your hand around your ear works: it essentially makes your ear bigger. Not much changes with the pinna to cause hearing loss. In fact, it’s one of the few body parts that continues to grow throughout your life. Perhaps this was evolution’s way of compensating for what happens in the middle and inner ears.

Once sounds are captured by the pinna, they travel down the auditory canal to the eardrum, or the tympanic membrane. That membrane is crucial, which is why they tell you to NEVER stick cotton swabs in your ear — you can easily damage it. You can also damage the auditory canal, which may impede the transmission of sound waves.

Why is the eardrum so vital? When sound waves hit the eardrum, it vibrates. Connected to it (in your middle ear) are the three smallest bones in your body, the ossicles. Their sole purpose is to vibrate when your eardrum vibrates: the eardrum sets the first ossicle in motion, which sets the next in motion, which sets the third in motion. Connected to the third ossicle is the vestibular window, another membrane, this one leading to the inner ear. In this way, the ossicles both transmit sound from the outer ear to the inner ear and amplify it.

These structures (eardrums, ossicles, and vestibular windows) are all affected by age. As you get older, the eardrum and the vestibular window become less flexible, and the ossicles become stiffer. These changes reduce your sensitivity to sounds because they reduce sound amplification and transmission. Likewise, if you damage your eardrum (e.g. by poking it with a cotton swab) you reduce its ability to vibrate and transmit sounds.

However, most of the changes that occur with age happen in the inner ear. Connected to the vestibular window is the cochlea, the most complicated structure in the ear. Simply put, when the vestibular window moves, it causes a wave (like ripples on water) to travel along a surface that stretches the length of the cochlea: the basilar membrane.

On that surface are the hair cells that you may have heard of, which send electrical impulses to your brain (they aren’t really hairs, but they sort of look like hairs). Different frequencies of sound resonate with hair cells at different points along that surface, allowing you to hear different sounds. As you age, the hair cells start to die off. Those associated with the highest frequencies are the first to die, and the loss gradually extends downward to lower frequencies.

In addition to age-related hearing loss, let’s not forget about noise-induced hearing loss — damage to nerve cells and other structures caused by exposure to loud noises. Noise-induced hearing loss isn’t caused by aging, but because you are likely to have been exposed to more damaging noise levels the older you are, it correlates strongly with age.

All of this is why design recommendations suggest tones under 2,500 Hertz for alerts — they’re low enough that even someone with extreme presbycusis can hear them.
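To make the guideline concrete, here is a minimal Python sketch that generates samples for a simple alert tone and rejects any frequency at or above the 2,500 Hz ceiling. The names `MAX_ALERT_HZ` and `alert_tone_samples`, the default duration, and the sample rate are all assumptions chosen for illustration; a real product would also need to consider loudness, repetition, and the listening environment.

```python
import math

MAX_ALERT_HZ = 2500  # guideline ceiling: alert tones should sit below this


def alert_tone_samples(freq_hz: float, duration_s: float = 0.5,
                       sample_rate: int = 44_100) -> list[float]:
    """Return sine-wave samples in [-1.0, 1.0] for an alert tone.

    Hypothetical helper: raises ValueError if the requested frequency
    is not below the 2,500 Hz guideline.
    """
    if not 0 < freq_hz < MAX_ALERT_HZ:
        raise ValueError(f"use a frequency above 0 and below {MAX_ALERT_HZ} Hz")
    n_samples = int(duration_s * sample_rate)
    return [
        math.sin(2 * math.pi * freq_hz * i / sample_rate)
        for i in range(n_samples)
    ]
```

Calling `alert_tone_samples(1000)` returns half a second of a 1,000 Hz tone, while `alert_tone_samples(4000)` raises a `ValueError` because the tone sits in the range that presbycusis tends to erode.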

But of course, not all auditory interactions are simple beeps or tones that let you know something is wrong or needs attention. Speech interactions are becoming more common as technology advances, and when done well, spoken instructions can be comprehended faster than written instructions. Siri-enabled Apple iPhones exemplify this trend, but it goes far beyond Siri.

Most of the remaining design recommendations mentioned above relate to these types of interfaces. The first of these is to minimize background noise. Older adults have difficulty separating the noise they want to listen to from background noises. This is especially true for speech. Unfortunately, certain aspects of human speech fall within the range of frequencies that are lost with age. Higher frequency consonants — t, p, k, f, s, and ch — become lost as presbycusis advances, leading to a loss in speech clarity. As a result, older adults tend to have difficulty understanding speech even under the best of circumstances. With background noise, the noise is heard in place of the missing consonants, making speech comprehension even more difficult.

Studies have also shown that older adults have more difficulty understanding synthetic speech than natural or digitized speech. Digitized speech is natural speech that is recorded; it can be broken up and recombined to produce different phrases, depending on what was originally recorded. Synthetic speech, on the other hand, is computer-generated.

The final two recommendations — using natural language and minimizing the amount of information presented through the auditory channel – relate more to the memory and attention changes that take place as you age. They are often included in auditory design recommendations because spoken instructions require the listener to pay attention to and remember the instructions to a greater degree than those presented visually (because those presented visually serve as their own memory aid). But changes to memory and attention are topics of a future article… so stay tuned.

Featured Photo by Mark Paton on Unsplash

Ear Structures: This work by Cenveo is licensed under a Creative Commons Attribution 3.0 United States License.